YouTube’s status as the most popular video sharing platform makes it especially useful to political extremists seeking to influence a wide audience. Links to associated videos and channels on YouTube are often propagated on other social media platforms such as Twitter. In this analysis, we were specifically interested in the extreme right (ER) video content found there, some of which has been available for several years.

YouTube also provides recommendation services, where sets of related and recommended videos are presented to users, based on factors such as co-visitation count and prior viewing history. From an initial manual analysis of YouTube links posted on Twitter by accounts that we tracked for a number of months (these accounts were also used in a previous work), we found that users viewing an ER video or channel were highly likely to be recommended further ER content.

For example, here is a YouTube video that was tweeted by one of the Twitter accounts in the data sets we used (screenshots were captured on 21 November 2014 because this material is occasionally removed):

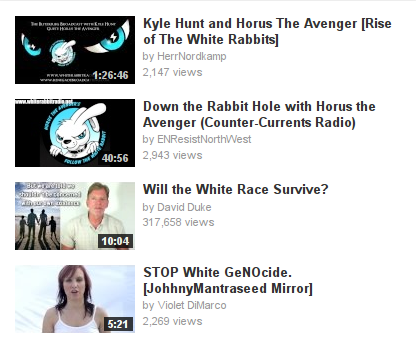

The top four videos recommended by YouTube all appear to be related to white supremacism, including one uploaded by David Duke:

Having selected this video, we see that it has been available since 2010 and has received over 300,000 views so far:

Our main objectives were to analyze the nature of this content and to demonstrate the potential consequences of online recommender systems, particularly the narrowing of the range of content to which users are exposed. The focus here was on one specific political context, namely, the ER. The data sets consisted of YouTube links found in two sets of tweets, one English-language and one German-language, collected between June 2012 and May 2013. For the videos uploaded by YouTube channels identified in these tweets, we retrieved the suggestions returned by YouTube’s related video service. All available uploader-specified text metadata was retrieved for the videos of both the tweeted channels and the suggested related channels. Each individual channel was then represented as a text “document”, consisting of an aggregation of the text metadata of its uploaded videos. The following video description demonstrates the potentially rich nature of this metadata (video is no longer available):

ASIA FOR THE ASIANS, AFRICA FOR THE AFRICANS, WHITE COUNTRIES FOR EVERYBODY!

Everybody says there is this RACE problem. Everybody says this RACE problem will be solved when the third world pours into EVERY white country and ONLY into white countries.

The Netherlands and Belgium are just as crowded as Japan or Taiwan, but nobody says Japan or Taiwan will solve this RACE problem by bringing in millions of third worlders and quote assimilating unquote with them.

Everybody says the final solution to this RACE problem is for EVERY white country and ONLY white countries to “assimilate,”, i.e., intermarry, with all those non-whites.

What if I said there was this RACE problem and this RACE problem would be solved only if hundreds of muillions of non-blacks were brought into EVERY black country and ONLY into black countries?

How long would it take anyone to realize I’m not talking about a RACE problem. I am talking about the final solution to the BLACK problem?

And how long would it take any sane black man to notice this and what kind of psycho black man wouldn’t object to this?

But if I tell that obvious truth about the ongoing program of genocide against my race, the white race, Liberals and respectable conservatives agree that I am a naziwhowantstokillsixmillionjews.

They say they are anti-racist. What they are is anti-white.

Anti-racist is a code word for anti-white.
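The channel “document” construction described before this example can be sketched roughly as follows. This is a minimal illustration rather than the pipeline we actually used: the field names (`channel_id`, `title`, `description`, `keywords`) and the sample records are hypothetical stand-ins for whatever uploader-specified metadata is available.

```python
from collections import defaultdict

# Hypothetical metadata fields; the real set of uploader-specified fields may differ.
TEXT_FIELDS = ("title", "description", "keywords")

def build_channel_documents(videos):
    """Aggregate the text metadata of each channel's videos into one document."""
    parts = defaultdict(list)
    for video in videos:
        for field in TEXT_FIELDS:
            text = video.get(field, "")
            if text:  # skip missing or empty fields
                parts[video["channel_id"]].append(text)
    return {channel: " ".join(texts) for channel, texts in parts.items()}

# Toy records, invented purely for illustration.
videos = [
    {"channel_id": "chan_a", "title": "March footage", "description": "Filmed in Luton"},
    {"channel_id": "chan_a", "title": "Speech", "keywords": "rally speech"},
    {"channel_id": "chan_b", "title": "Live concert", "description": ""},
]
documents = build_channel_documents(videos)
print(documents["chan_a"])
```

Each resulting string can then be treated as a single document in any standard text-mining workflow.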

A number of other works have addressed the categorization of video content on YouTube. However, as these have generally focused on mainstream content, the categories used were not suitable for ER content, where we required something more specific. Although a number of researchers have suggested various categories to characterize online ER activity, it appears that a single definitive set of categories is not available. We therefore proposed a categorization based on various schemas found in a selection of academic literature on the extreme right, chosen to suit the ER videos and channels we found on YouTube. Some categories are clearly delineated while others are less distinct, reflecting the complicated ideology and fragmented nature of associated groups and sub-groups. The eleven categories used were:

- Anti-Islam

- Anti-Semitic

- Conspiracy Theory

- Music

- Neo-Nazi

- Patriot

- Political Party

- Populist

- Revisionist

- Street Movement

- White Nationalist

As both data sets contained various non-ER channels that appeared in the original tweets, we also created a corresponding set of essentially mainstream categories consisting of a selection of the general YouTube categories (as of June 2013), in addition to other categories that we deemed appropriate following an inspection of this content:

- Entertainment

- Gaming

- Military

- Music

- News & Current Affairs

- Politics

- Religion

- Science & Education

- Sport

- Television

With each channel represented as a document built from the text metadata of its uploaded videos, the channels were then categorized using topic modeling. Topic modeling is concerned with the discovery of latent semantic structure, or topics, within a set of documents, which can be derived from co-occurrences of words in documents. Although Latent Dirichlet Allocation (LDA) is commonly employed for this purpose, we found that Non-negative Matrix Factorization (NMF) generated the most readily interpretable results, similar to our findings in a previous work. The categories listed above were assigned to the discovered topics, which then permitted the categorization of the channels based on their topic associations. Multiple categories were assigned to topics where necessary, as using a single category per topic would have been too restrictive.
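To give a feel for what NMF does with channel documents, here is a small sketch using the classic multiplicative update rules on a toy channel-by-term matrix. It is an assumption-laden illustration, not the implementation used in the study: the terms, the counts, the number of topics and the iteration count are all invented, and a real analysis would typically use a weighted term matrix and a library implementation.

```python
import numpy as np

def nmf(V, n_topics, n_iter=200, seed=0, eps=1e-9):
    """Approximate V (channels x terms) as W @ H with non-negative factors,
    using multiplicative updates for the Frobenius-norm objective."""
    rng = np.random.default_rng(seed)
    W = rng.random((V.shape[0], n_topics)) + eps  # channel-topic weights
    H = rng.random((n_topics, V.shape[1])) + eps  # topic-term weights
    for _ in range(n_iter):
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

# Toy term counts (rows: channels, columns: terms), invented for illustration.
terms = ["islam", "sharia", "concert", "punk"]
V = np.array([
    [3.0, 2.0, 0.0, 0.0],
    [2.0, 3.0, 0.0, 0.0],
    [0.0, 0.0, 4.0, 2.0],
    [0.0, 0.0, 2.0, 3.0],
])
W, H = nmf(V, n_topics=2)

# Assign each channel to the topic with its largest weight in W.
channel_topics = W.argmax(axis=1)
reconstruction_error = np.linalg.norm(V - W @ H)
print(channel_topics, round(float(reconstruction_error), 3))
```

The rows of `H` indicate which terms characterize each topic, while the rows of `W` indicate how strongly each channel is associated with each topic; it is these associations that allow categories assigned to topics to be carried over to channels.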

Separately, YouTube also annotates videos with topics found in the Freebase knowledge graph, based on the supplied text metadata and audio-visual features of the videos (although not all videos have such annotations). We used this resource to validate the reliability of the topic categorization: for each NMF topic discovered, we generated a ranking of Freebase topics using the Freebase English-language topic labels assigned to the channels that were closely associated with that NMF topic. The following examples from both data sets demonstrate the reliability of the categorization.

English-language dataset NMF topics:

| Channels | Category | Top 10 Freebase topics |
|---|---|---|
| 257 | Anti-Islam, Street Movement | English Defence League, Tommy Robinson, Unite Against Fascism, Muslim, Islam, Luton, British National Party, Leicester, Sharia, Combat |
| 220 | Music | Oi!, Punk rock, Skinhead, Concert, Rock music, Rock Against Communism, Alternative rock, Heavy metal, Hardcore punk, Theme music |

German-language dataset NMF topics:

| Channels | Category | Top 10 Freebase topics |
|---|---|---|
| 19 | Music, Neo-Nazi, White Nationalist | Frank Rennicke, National Democratic Party of Germany, Landser, Hassgesang, Music, Division Germania, Die Lunikoff Verschwörung, Unseren Toten, Horst Mahler, Projekt Aaskereia |
| 99 | Music | National Democratic Party of Germany, Udo Pastörs, Dresden, Berlin, Anti-Fascist Action, Holger Apfel, Jürgen Rieger, The Left, German People’s Union, Frank Rennicke |
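One plausible way to produce rankings like those in the tables above is to count how many of a topic’s closely associated channels carry each Freebase label. The sketch below makes several assumptions that are not taken from the study: the membership threshold `min_weight`, the use of simple channel counts as the ranking score, and all of the sample data are hypothetical.

```python
from collections import Counter

def rank_freebase_labels(topic_weights, channel_labels, min_weight=0.5, top_n=10):
    """Rank Freebase labels by the number of closely associated channels carrying them.

    topic_weights: channel -> weight of that channel for one NMF topic.
    channel_labels: channel -> list of Freebase labels found on its videos.
    """
    counts = Counter()
    for channel, weight in topic_weights.items():
        if weight >= min_weight:  # assumed cut-off for "closely associated"
            counts.update(set(channel_labels.get(channel, [])))
    # Sort by descending count, breaking ties alphabetically for determinism.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [label for label, _ in ranked[:top_n]]

# Toy inputs, invented for illustration.
topic_weights = {"chan_a": 0.9, "chan_b": 0.7, "chan_c": 0.1}
channel_labels = {
    "chan_a": ["Islam", "Luton"],
    "chan_b": ["Islam", "Sharia", "Luton"],
    "chan_c": ["Punk rock"],
}
top_labels = rank_freebase_labels(topic_weights, channel_labels)
print(top_labels)
```

If the top-ranked labels for a topic agree with the category assigned to it by hand, that provides some independent support for the categorization.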

We then investigated the potential for YouTube’s recommendation system to generate an ideological bubble around the ER channels propagated on Twitter. As prior work has shown that the position of a video in the related lists suggested by YouTube plays a critical role in its click-through rate, we investigated the categories of the top k channels related to the tweeted channels, for increasing values of k up to 10. We considered an ideological bubble to exist for a particular ER category, associated with a set of tweeted channels, when its highest ranking related channel categories were also ER categories. From inspecting the generated category plots, two observations can be made:

- the tweeted channel category is the dominant related category for all values of k, and,

- although related category diversity increases at lower rankings (larger values of k) with the introduction of certain non-ER categories, ER categories are consistently present.
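The top k analysis behind these observations can be sketched as follows. This is a simplified illustration under stated assumptions: each channel is given a single category (the study allowed several), the label `ER Music` is used here only to distinguish it from mainstream Music, the `top_n` parameter that decides how many of the highest ranking categories must be ER is hypothetical, and the sample data is invented.

```python
from collections import Counter

# The eleven ER categories; "ER Music" is an assumed label to avoid a clash
# with the mainstream Music category.
ER_CATEGORIES = {
    "Anti-Islam", "Anti-Semitic", "Conspiracy Theory", "ER Music", "Neo-Nazi",
    "Patriot", "Political Party", "Populist", "Revisionist", "Street Movement",
    "White Nationalist",
}

def top_k_category_counts(related, channel_category, k):
    """Count categories among the top-k related channels of each tweeted channel."""
    counts = Counter()
    for ranked_related in related.values():
        for channel in ranked_related[:k]:
            category = channel_category.get(channel)
            if category is not None:  # ignore uncategorized channels
                counts[category] += 1
    return counts

def is_bubble(counts, top_n=3):
    """True if the top_n most frequent related categories are all ER categories."""
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))[:top_n]
    return bool(ranked) and all(category in ER_CATEGORIES for category, _ in ranked)

# Toy data: tweeted channel -> related channels in YouTube's ranked order.
related = {"t1": ["r1", "r2", "r3"], "t2": ["r4", "r5", "r3"]}
channel_category = {
    "r1": "Anti-Islam", "r2": "Street Movement", "r3": "News & Current Affairs",
    "r4": "Anti-Islam", "r5": "Political Party",
}
for k in (1, 2, 3):
    counts = top_k_category_counts(related, channel_category, k)
    print(k, dict(counts), is_bubble(counts))
```

In this toy data, a non-ER category only enters the picture at k = 3, which mirrors the pattern described in the second observation.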

In the case of ER related categories, the relationship to the particular tweeted ER category appeared to make sense in many cases, for example, the observed relationship of Street Movement or Political Party with Anti-Islam, or of White Nationalist with Neo-Nazi. Non-ER related categories can also be observed for ER Music, which is logical given that a fan of ER Music is likely to listen to other, non-ER, music.

Whereas previous studies in this area have highlighted the online ideological bubbles or echo chambers resulting from choices made by content consumers (e.g. some of the discussion in Pariser’s “Filter Bubble”, or the work of Cass Sunstein), this article is concerned with content recommenders, in terms of the video and channel links suggested by YouTube. For more details of the results described above, along with additional discussion, please see the full article.

Derek O’Callaghan is a postdoctoral researcher with VOX-Pol. His research is concerned with online extremist activity, particularly that occurring on social media platforms, with a focus on social network analysis and text mining.