By Alexander Ritzmann

The Propaganda Process

Is online propaganda really effective? How can it be countered? And what can practitioners of Preventing Violent Extremism (PVE) and policymakers learn from the research findings of other relevant disciplines, such as anthropology, psychology and neuroscience?

Propaganda, understood here as the strategic communication of ideas aiming at manipulating specific target audiences for an extremist cause, generally has three main components. First, it provides a diagnosis of “what is wrong”. Second, it offers a prognosis of “what needs to be done”. Third, it supplies a rationale: “who should do it and why” (Wilson 1973).

The self-proclaimed Islamic State (IS), for example, claims that Islam and Sunni Muslims are under attack (diagnosis), that a Caliphate needs to be created (prognosis), and that YOU need to help in any way you can (rationale).

Right-wing movements use the same approach. In their diagnosis, migration and corrupt elites are a threat to the white or national identity. The prognosis is that only homogeneous societies with high walls can ensure survival. Then they ask their target audiences to join the fight in any way they can.

Strong psychological draws are part of the process. Those who do join extremist groups often project the fulfillment of their emotional needs onto these groups. Young men seeking adventure and social standing are promised a future as heroes fighting for a just cause. Women are promised an important role as the wives of the “lions of the Caliphate”, securing its future by raising their “cubs”, or, on the right, as the “mothers of the nation”, safeguarding the future of the white race.

Propaganda Is a Tribal Call to Arms

What exactly is the role of propaganda in this context? Social psychology research findings indicate that propaganda is a “tribal” call, aiming to establish clear red lines between “us” and “them”. This call appeals to one of our most basic instincts – belonging to a group.

Psychological experiments on “social identity”, replicated over decades, show that humans form groups very easily (Ratner 2012). For our in-group to feel real, we need an out-group that is different and less attractive to us. In a state of competitiveness, over resources or the moral high ground, we then easily dismiss the “others” as being wrong, stupid, violent or dangerous.

This is what we see in extremist calls to arms. Extremist propaganda claims that competition is plainly evident, that the threat from the “others” is real, and that you need to act if you want to be a good member of that group.

The Will to Fight and Die

But why would anyone act? Simply put, because it feels good. The research indicates that joining a group that claims moral superiority lifts us up as individuals and heightens our self-worth because we can look down on others.

We have a psychological need for both inclusion and exclusion. So, we need to feel that we are part of something, but we also need to feel that the group is not open to everyone in order for us to feel important ourselves (Brewer 2001).

This “tribal” dynamic is present in our daily life. It drives diehard football fans, engaged members of religious denominations, nationalists, communists, or indeed any other group that claims to be “better” than all the others (Sunstein 1999). Wanting to join such a group seems to be a part of our basic programming, more nature than nurture.

Anthropological research indicates that there are different forms of engagement with this group identity. The closer our core values and morals are to those of our chosen in-group, the harder it is for us to change our minds. This factor is especially strong if the individual and group values and morals become identical, or “fused”. Those who have developed a “fused” identity are often willing to fight and die for their group and seem much more driven by morals, identity and ideas than kinship or material rewards (Atran 2016).

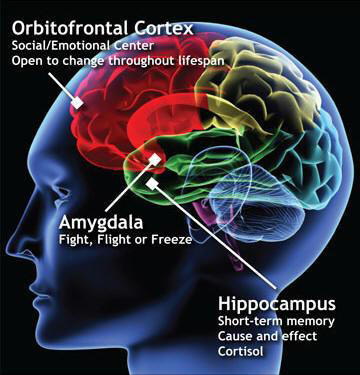

Neuroscientific experiments have shown that if someone’s core beliefs and identity are threatened by conflicting information, be it factual or emotional, the amygdala, the fight / flight / freeze part of our brains, takes control (Kaplan 2016). Put differently, the same part of our brain that makes decisions if we encounter a grizzly bear in the woods is in charge when someone tries to convince us that we are all wrong and need to let go of our group identity. This indicates that for the extremist, even a well-intended intervention by a PVE practitioner might be perceived as a serious threat to the individual’s identity and wellbeing.

There are many theories why we are programmed to cling to our core values and in-groups. For example, some highlight that in the history of humanity, those who stayed within their group were much more likely to survive than those who wandered off and met a grizzly bear all by themselves.

Filter Bubbles Protect Us from Manipulation

Extremist ideas are present in our daily life, be it online through social media, in reports by the mainstream media, or in our “offline” social circles. Specific propaganda is often easily accessible. Yet few actually heed the call of extremists to join and fight with them. Why is that the case?

The mainstream discourse on the negative social and political effects of online “filter bubbles”, referring to algorithms that pre-select information based on our previous interests, often misses the point that the “offline filter bubbles” in our mind actually protect us. They are an important part of our individual defense mechanisms against unwanted manipulation (Kahan 2016).

An interesting set of biological algorithms, often referred to as “motivated reasoning”, comes into play here. “Motivated reasoning” describes a set of biases and mental shortcuts (heuristics) that defend us against switching groups and allegiances every time we see a well-crafted propaganda clip (Epley 2016). Subconscious functions of the mind, such as confirmation bias and active information avoidance, pre-select information that confirms existing beliefs and prefer it to new information that challenges them (Kolbert 2017).

That said, following the logic of the “information deficit theory”, we do sometimes update our knowledge or beliefs if we learn something new. However, as soon as new information challenges our core values, our very identity and our belonging to an in-group, our biological algorithms automatically dismiss it. Humans value being a good member of their “tribe” much more than being correct. We will choose to be wrong if it keeps us in good standing with our group.

It Takes Two to Tango

So how can propaganda overcome our natural defense mechanisms? It often needs, at least in the beginning, the cooperation of the targeted individual. For adults to adopt an extremist ideology, a cognitive opening – a desire to change oneself – is necessary. This desire to change is often driven by a personal crisis. This means that it is hard for us to change our opinions or beliefs fundamentally, and it is even harder for someone else to do this against our will.

Radicalization, in many cases, can be understood as a tango, where one dancing partner leads and the other has to be willing to “dance along”. In some cases, the desire to find a new identity and in-group is so strong that self-recruiters lead the way, projecting the fulfilment of their needs onto an extremist group. In most cases, a trusted person provides the necessary “credibility” that makes it easier for the individual to accept manipulated information. This “trusted messenger” could be a family member, a friend or a charismatic recruiter (Vidino 2017).

Young people are most at risk. They are in a developmental phase, curious, and looking for answers and a place in society. This can make them vulnerable to manipulation. Their defensive “filter bubbles” are not yet fully developed.

“Echo chambers”, referring to environments that pre-select only like-minded ideas and opinions, are surely not a new phenomenon (Garret 2013). They are part of our cognitive programming and our quest for a strong group identity. The power of modern social media, however, has allowed humans to signal their “tribal” loyalties on a scale that was impossible in the past. The so-called “mere-exposure effect”, which leads us to like and believe what we most often see and hear, is thereby strengthened, and this amplifies the negative effects “echo chambers” can have.

How Is This Relevant for PVE Practitioners and Policymakers?

To prepare for this growing challenge, the critical media literacy of the population needs to be increased dramatically. In particular, youths in schools need to be taught how to evaluate and qualify sources of information and how to identify manipulation. This should be seen as a part of “democracy training”, with the concept of critical thinking applied in daily life (RAN EDU 2018).

As shown above, the good news is that propaganda rarely works on those who don’t have a drastic need to change their social identity and in-group. The bad news is that the same conditions limit the effectiveness of counter-narratives.

The term “best practice” is widely used in the PVE field. Traditionally, it refers to a professionally evaluated and peer-reviewed practice that could serve as a standard for everyone. However, most narrative or strategic communication campaigns, like most PVE activities in general, don’t match this definition.

The Radicalisation Awareness Network’s “Communication and Narratives” working group, which aims at exchanging experiences between practitioners to shorten learning curves, has collected some key lessons learned from relevant research and inspiring practices that might be useful when aiming to change someone’s mind (RAN C&N 2018):

- Do no harm: don’t spread extremist propaganda by trying to prevent extremism. Studies indicate that raising young people’s awareness of an issue which authorities disapprove of may actually stimulate interest in the issue rather than dissuade them (Hornik 2008, Chan 2017). Therefore, highlighting the danger of specific extremist or terrorist groups can prove counterproductive and should only be done in an educational setting (Cook 2011). Counter-narratives in particular should only target a well-defined and understood audience that is already curious about extremist content.

- Avoid stigmatization: when trying to increase the resilience of a specific target audience against extremist propaganda and recruitment, be aware that you might be perceived as stereotyping and mistrusting this group. Ensure you have a good understanding of the sensitivities and concerns of your target audience, so as not to foster polarization.

- Don’t be confrontational: the more radicalized your audience is, and the more their individual identity, morals and sacred values are “fused” with an extremist ideology or group, the less effective a confrontational approach will be (Atran 2016).

- Use an indirect approach: alternative or counter-narratives are more likely to resonate with such an audience if you take an indirect approach, for example utilizing surreal contexts like those of science fiction, adventure, or mystery (Green 2017). Since this does not feel like an attack on their core values and identity, they might remain open-minded to alternative information (Kaplan 2016).

- Highlight shared values: many conflicts are based on differing moral preferences rather than detailed political or religious issues. If this is the case, consider reframing your message so it connects with the moral foundations of the targeted audience. For example, highlight shared values (Feinberg 2015) such as justice, equality and tradition, and use these as a bridge to connect opposing camps.

- Introduce new information and mental models: people are less likely to accept the debunking of old or false beliefs when they are merely labelled as wrong. Instead, they should be countered with new evidence. The new messages should also offer a new model for understanding the information. Elaboration and discourse increase the likelihood of replacing the old or false model with a new one (Cook 2011).

- Quantity matters: a regular stream of messages has a higher chance of success. Research indicates that the share of alternative and counter-messages in a person’s information stream or “echo chamber” needs to reach around 30% to help change his or her mind (Cook 2011).

How can these findings be implemented most effectively?

The credibility of the messenger presenting any kind of communication is determined by the targeted audience. Working with local partners and empowering local initiatives is therefore more likely to achieve the desired effects than well-intended interventions from outside.

Alternative narratives, which aim to promote positive messages, universal values, role models or other kinds of information relevant to a specific part of the population, should be supported much more strategically.

And finally, extremist propaganda that promotes illegal content needs to be disrupted and taken down from online media much faster. This kind of illegal content often goes viral within 60 minutes, so artificial intelligence needs to be applied.

Technology can certainly help. Systems such as eGLYPH, which can automatically detect and delete content that has been flagged before, are important tools to implement the rule of law in the online sphere.

That said, to protect free speech, the application of algorithms by governments and companies needs to be transparent and limited to the worst of the worst, focusing on clearly illegal content. Ultimately, the debate about which ideas and arguments are within open democratic discourse cannot be delegated to technology.

Alexander Ritzmann is Co-Chair of the Radicalisation Awareness Network’s (RAN) “Communication and Narratives” (C&N) Working Group and an Advisor to the Counter Extremism Project (CEP), which is promoting eGLYPH. You can follow him on Twitter: @alexRitzmann

This article was originally published on the European Eye on Radicalization website. Republished here with the permission of the author.